Artificial intelligence has moved from experimentation to boardroom priority. Enterprises across finance, telecoms, and public sectors are racing to adopt AI-driven solutions — yet over 90% of proof-of-concept initiatives reportedly fail to reach production.

The gap is rarely about AI capability alone. More often, it lies in the disconnect between scattered areas of expertise along the path from high-level AI ambition to the realities of performance, resilience, and measurable execution.

To explore how AI’s technological promise can be “landed” into practical, high-impact use cases, we spoke with Yury Rassokhin, a senior AI infrastructure practitioner with over two decades of experience spanning high-performance computing, cloud, and artificial intelligence. He has led the architectural design of large-scale AI infrastructure deployments across EMEA, including foundational initiatives such as Etisalat, which has since evolved into a national-level AI platform in the UAE.

When did your fascination with IT begin?

It started very early. When I was 11 years old, I became curious not just about using software, but about how it truly works underneath.

One of my first experiments involved the classic game King’s Bounty. I discovered that by creating two save files differing only in the number of in-game weeks, I could compare their binary representations and locate where that week counter was stored. Once found, I could modify it directly – effectively bypassing the artificial 400-week limitation imposed by the game. Looking at the raw binary dump of that save file, I experienced an aesthetic appreciation of the hidden layers of software. Those binary structures were never meant to be seen by users, yet they formed an elegant system – and they revealed their logic to me. That was my first IT lesson – you tame IT systems by diving into their depths, not by scratching the surface.
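The save-file comparison described above can be sketched in a few lines of Python. This is an illustration only, not the original tooling: the 16-byte "saves", the position of the week counter, and the helper names `differing_offsets` and `patch_byte` are all assumptions made up for the example and do not reflect the real King’s Bounty save format.

```python
def differing_offsets(a: bytes, b: bytes) -> list[int]:
    """Return the byte offsets at which two equal-length byte strings differ."""
    if len(a) != len(b):
        raise ValueError("a positional diff needs inputs of equal length")
    return [i for i, (x, y) in enumerate(zip(a, b)) if x != y]


def patch_byte(data: bytes, offset: int, value: int) -> bytes:
    """Return a copy of data with the byte at offset replaced by value."""
    patched = bytearray(data)
    patched[offset] = value
    return bytes(patched)


# Two hypothetical 16-byte saves that differ only in the week counter.
save_week_3 = bytes([0x4B, 0x42] + [0] * 8 + [3] + [0] * 5)
save_week_4 = bytes([0x4B, 0x42] + [0] * 8 + [4] + [0] * 5)

# The only differing offset is where the counter lives in this toy layout.
offsets = differing_offsets(save_week_3, save_week_4)
print(offsets)

# Rewind the counter by writing a new value at the discovered offset.
rewound = patch_byte(save_week_4, offsets[0], 1)
```

With real files, the same two helpers would be applied to the contents read with `open(path, "rb").read()`. A counter wider than one byte would show up as a short run of adjacent offsets.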

I gained another pivotal experience shortly afterward. I began writing a simple game on the ZX Spectrum, an unimaginably slow platform by modern standards, and I wanted the game’s protagonist to move smoothly in arbitrary directions, not just in the boring left-right-up-down pattern. However, calculating the required trigonometric functions, sine and cosine, in real time was prohibitively slow on that hardware. Instead of accepting the limitation, I found an optimisation: I precomputed a static table of cosine values for predefined angles at startup. With that change, the game efficiently reused the precomputed values, and the character’s motion suddenly looked natural and fluid. That was my second IT lesson – performance analysis, combined with a creative approach, makes the impossible possible.
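A precomputed cosine table of this kind might look as follows in Python. This is a sketch under stated assumptions, not the original ZX Spectrum code: the table size `STEPS`, the helper names `fast_cos`, `fast_sin`, and `step`, and the choice to derive sine from the same table by a quarter-turn shift are all hypothetical.

```python
import math

STEPS = 256  # assumed resolution: one table entry per 1/256 of a full turn

# Built once at startup; the game loop afterwards performs only table lookups.
COS_TABLE = [math.cos(2 * math.pi * i / STEPS) for i in range(STEPS)]


def fast_cos(angle_index: int) -> float:
    """Cosine of angle_index/STEPS of a full turn, read from the table."""
    return COS_TABLE[angle_index % STEPS]


def fast_sin(angle_index: int) -> float:
    """Sine from the same table, using sin(x) = cos(x - quarter turn)."""
    return COS_TABLE[(angle_index - STEPS // 4) % STEPS]


def step(x: float, y: float, angle_index: int, speed: float) -> tuple[float, float]:
    """Move a point by `speed` units in the direction given by angle_index."""
    return x + speed * fast_cos(angle_index), y + speed * fast_sin(angle_index)


# Move one unit along the diagonal (index 32 of 256 is an eighth of a turn).
new_x, new_y = step(0.0, 0.0, 32, 1.0)
```

The trade-off is a small fixed amount of memory and a coarser angle resolution in exchange for replacing each trigonometric evaluation with an index operation.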

These two lessons have followed me throughout my career.

How did you turn IT into a professional career?

As a university graduate in Mathematics and Computational Methods, I joined a startup company as an embedded software developer. The company implemented the ZigBee protocol and I developed an optimising translator from its domain-specific language into standard C.

My task was to fit the code of our ZigBee stack into a 16–32 KB system-on-a-chip, the smaller the better. As those applications were represented as loosely coupled communicating components, I came up with an internal representation of translated code as a global graph, where data paths and execution paths were unified in one model reflecting data dependencies. Back then, optimising compilers would analyse either execution flow or data flow, and my idea was a “data dependence graph”, as I called it, which included the semantics of both, but at a higher level of abstraction. This model reflected what the source code did to the data, not how it did it. This allowed me to find minimalistic forms of the code – the ones with a smaller memory footprint – while retaining data semantics as a fundamental invariant. For example, a C function may take 4 input arguments (“has a signature of 4 arguments”) and use them to return the resulting value. My approach examined whether the function could be redesigned – the signature included – using, say, 2 arguments of a compatible type, which would halve the memory footprint of the signature. Transformation of a function signature is a very basic example, but it gives the idea – function signatures had been considered an invariant, and I broke this convention by shifting the invariant from the signature to an abstract data-dependence representation. That work later resulted in a peer-reviewed publication, reflecting my early interest in combining in-depth analysis with practical constraints. That was an inspiring head start after university, and I knew I wanted to go deeper into the depths of IT solutions.

You later moved into high-performance computing. Why such a major shift?

At a glance, it looks counterintuitive. However, it was a calculated move. The point is that embedded systems and HPC share a fundamental paradigm – both push computing resources beyond what seems possible, the former by extreme scarcity, the latter by extreme scale. In both domains, success depends on understanding systems deep inside, at the most fundamental levels. It’s all about optimising data flows, execution paths, and memory access – so to say, success comes from squeezing every bit of capacity from the system.

I am genuinely grateful for my time in HPC. Throughout the years that followed, I made my way from Field Engineer to Chief HPC Architect, participated in major global HPC installations – including a system ranked #13 worldwide at the time of deployment – and gradually extended my experience to CEE, EMEA, ASEAN, and LATAM. At the same time, I developed expertise in Linux internals, system-level performance analysis, and large-scale data processing. This is what laid a solid foundation for my professional chapter in AI and data processing – which I have pursued since the beginning of the AI era.

How did you decide to move into cloud and AI?

That happened naturally. Over time, HPC embraced the cloud paradigm and started blending with cloud infrastructures. I took it as a paradigm shift and dove into the emerging HPC-as-a-Service domain. At that time, I solved enterprise IT challenges in an unconventional way – I applied my HPC background. As enterprises were migrating to the cloud more and more, I found that HPC techniques often solved enterprise problems more efficiently than conventional enterprise approaches. I called this HPC4Enterprise and made it my personal brand. Here are several examples.

A FinTech company required environment-agnostic remote (synchronous) mirroring of unmodified database applications at minimal cost. Standard tooling would be either too expensive or too restrictive. I made it possible by adopting the HPC file system GlusterFS with kernel-level tuning, leveraging the robust, high-performing networking capabilities of a cloud hyperscaler. This approach maintained a mirrored secondary facility of the banking services at virtually no extra cost, and it was operating-system-agnostic with high sustained storage performance. The key to success was connecting domains that are normally disconnected – enterprise workload, HPC storage architecture, and cloud infrastructure. As I let these areas intersect, a new solution was born and later adopted for cloud infrastructure.

Another company, a provider of VDI services, faced an issue of workload spikes. They provided services to their clients using a pool of virtual machines. The problem was intra-day spikes driven by the schedule of their target audience. For 5% of the time their pool was overloaded and suffered from service delays, and for the remaining 95% of the time the pool stayed underutilised. Obviously, procuring extra capacity would make the underutilisation issue even worse. When I analysed the case, I again let the HPC world intersect with their enterprise world – I suggested that we further develop their storage subsystem to leverage Linux-based in-RAM storage technology. In the Linux world, it was well-known. However, it came as a surprise to the company. Of course, it was easier said than done, as we had to properly integrate several storage layers of substantially different natures to make up a ubiquitous namespace. It had to be a parallel storage shared across virtual machines as one, and scalable on demand. At the same time, it had to efficiently involve local RAM as a hot tier using the BRD driver in the Linux kernel. Finally, we designed a balanced integration, and the company enjoyed 22× acceleration of their services at no extra cost. The first thing they said was: “That’s impossible!” Yet the impossible became real once we connected areas of expertise creatively. That architecture design was later publicised as well.

As the next tectonic shift, AI arrived in the industry – and it took a seat on HPC and cloud as its underlying stack. Simply put, AI runs on cloud infrastructure, and it uses HPC technologies to run efficiently. I took it as a pivotal point in history, and did my best to become part of it early on.

You have worked with enterprises for a long time. What industry challenge do you see in the context of AI?

I realized AI technology persistently suffers from fragmented knowledge. In fact, this problem goes beyond AI, and you may have noticed the fragmentation pattern in the examples we just touched on. Enterprise and HPC areas evolve but remain disconnected. The enterprise gets stuck on a challenge with no feasible solution, yet once we let enterprise and HPC collide, an efficient solution emerges.

This is what I call a fragmented knowledge problem – while two knowledge domains remain disconnected, both are missing out on added value. And vice versa, as we collide the domains, their collision generates added value that otherwise would have remained hidden from either domain. This challenge goes far beyond the enterprise and HPC pair. In particular, I have found AI to be impacted by fragmentation of knowledge more heavily than any other domain.

Can you give an example of the fragmented knowledge in the AI domain?

AI-driven projects provide such examples in abundance. I’ll guide you through an illustration based on my actual work. Let’s consider a commercial bank on a mission to adopt AI. Perhaps the bank starts from the Legal & Compliance department – namely, they aim to monitor and analyse all the transactions and contracts passing through, in real time. This looks like an unambiguous use case from the AI domain – and it looks impressive at a high level.

As we plan its execution, however, the gap between high-level technology promise and practical circumstances opens up with questions coming from “ground zero”. How do we select an AI model for specific constraints of the bank? What are the selection parameters? In particular, how does response latency depend on storage, memory, CPU, GPU, and network architecture? Which of those are crucial and which ones can be sacrificed? And finally, how do we quantify our choices in a verifiable way for stakeholders? This final question is vitally important because the C-level requires quantified, verifiable, and reproducible data for decision-making. At that point, the bank got stuck.

As I stepped in, I suggested they conduct performance benchmarking of the candidate implementations of AI to have solid numbers for decision-making on hand. Benchmarking-based decision-making had been a well-developed practice in the HPC domain – much less so in the enterprise domain to my surprise. So, the root cause of the initial delay in AI strategy was fragmentation of knowledge – namely, disconnection of the performance benchmarking and enterprise areas.

As the next step, the bank aims for service continuity requirements – meaning a data centre failure must not affect AI services being provided. This looks like a straightforward HA/DR domain. However, the HA/DR domain is disconnected from the AI domain at the bank – the bank knows how to run AI; and the bank knows how to implement HA/DR as well. Truth is, the bank needs neither. What they really need is HA/DR implemented with respect for AI specifics. How do you implement HA/DR for AI service without impacting performance of the underlying storage – and without eventually impacting performance of AI? It is vitally important to keep storage for AI at its optimal performance to fully utilise AI for the sake of effective ROI. This is where the bank finds out their AI and HA/DR areas of knowledge remain largely disconnected. Now they need to overcome fragmentation of knowledge by colliding these areas and generating a solution.

As you see, I started this example from a seemingly obvious AI use case and we ended up with a repetitive pattern of a challenge. It’s either vertical fragmentation of knowledge between high-level messages (“AI can do legal checking for you”) and ground zero where technology meets practical constraints – or horizontal fragmentation of knowledge where a knowledge domain lacks added value that could have been brought through collision with other domains.

The AI industry demonstrates fragmented knowledge very often, especially vertical fragmentation. Reportedly, over 90% of AI proof-of-concept projects fail to reach production.

And how do you approach solving these challenges?

As the root issue is disconnected areas of knowledge, my “know-how” was to conduct research to identify missing domains and connect them. Let’s get back to the example of our bank’s AI strategy. Their “AI-can-do-legal-analysis” message needed a quantified foundation. I researched which domains were missing. First, performance benchmarking – I found such tools in HPC, Linux, and the open-source world. Second, I needed more than generic benchmarking – rather, I needed a tool to draw a complete picture of the performance landscape of the AI system. Further research led me to the “sweep analysis” methodology that had been developed in physics and chemistry. This methodology assumes that a target system is characterized by a finite set of variable parameters, and the state of the system is a function of these parameters. The benchmarking then “sweeps” over all viable combinations of parameter values while monitoring the target function. As a result, we obtain what is called a “heat map” of the system in action, and we can see where the parameters create sweet spots or bottlenecks in the performance of the system. I connected fragmented domains by adopting this approach for the benchmarking of IT systems, AI solutions included.

First of all, I translated this idea into rigorous mathematical terms. Effectively, I needed to compose a multidimensional space as a Cartesian product of dimensions, each represented by a variable parameter of the system. The target metrics of the system – such as performance – would become functions on that Cartesian space. Finally, I needed this model to flexibly integrate with any IT system and be able to fetch any parameters whatsoever. I made it happen as a new computing engine for operations on multidimensional Cartesian spaces, known as Flex Cartesian.
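The idea of a metric defined on a Cartesian product of parameters can be sketched with the Python standard library. This is a minimal illustration of sweep analysis in general, not the Flex Cartesian API: the function `sweep`, the three parameter names, and the toy latency model with its made-up coefficients are hypothetical stand-ins for a real benchmark run.

```python
import itertools


def sweep(dimensions, metric):
    """Evaluate metric at every point of the Cartesian product of dimensions.

    dimensions maps a parameter name to the list of values to try.
    Returns the parameter names and a dict from value tuples to metric values.
    """
    names = list(dimensions)
    results = {}
    for values in itertools.product(*(dimensions[n] for n in names)):
        point = dict(zip(names, values))
        results[values] = metric(**point)
    return names, results


# Hypothetical tuning knobs of an AI service; real ones would differ.
dimensions = {
    "batch_size": [1, 8, 32],
    "preload_to_ram": [False, True],
    "vector_search_on": ["gpu", "cpu"],
}


def toy_latency_seconds(batch_size, preload_to_ram, vector_search_on):
    """Made-up cost model; replace with an actual measurement of the system."""
    latency = 10.0 + 0.25 * batch_size
    if preload_to_ram:
        latency -= 4.0
    if vector_search_on == "cpu":
        latency += 1.5
    return latency


names, results = sweep(dimensions, toy_latency_seconds)

# The "sweet spot" is the point of the space with the lowest metric value.
best = min(results, key=results.get)
print(dict(zip(names, best)), results[best])
```

The `results` dictionary is the raw material for a heat map: fixing all but two parameters and plotting the metric over the remaining two gives one slice of the performance landscape. The number of points grows as the product of the dimension sizes, which is why only viable combinations are swept in practice.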

The new engine immediately made our lives easier as it allowed me to “set it and forget it” on the AI prototype for the bank until it provides me with a comprehensive report. The bank and I could visually identify sweet spots of their AI such as what storage tunings to use; how and when to offload vector search from GPU to CPU; whether to preload from NVMe to RAM or not; how to tune underlying storage, and so forth.

The result? I demonstrated, based on quantitative data, that the bank is good to go with cost-efficient GPU resources for AI. At the same time, they achieve 5–7 seconds for a full compliance report, compared to the 15–20 seconds they had started from. This result became possible as the fragmented domains collided: enterprise requirements, performance analysis, and sweep analysis.

The second challenge was to “collide” AI workload with HA/DR for the sake of service continuity. I found AI and HA/DR fragmented in a way that HA/DR techniques had been drifting toward flexibility and elasticity – such as Ceph and K8S, heavily influenced by the cloud-native paradigm. I genuinely support the cloud-native approach – but we should consider the cost it comes at, such as unpredictable storage performance. That was unacceptable for AI workloads: I had to provide AI with very high and stable performance for the investment to pay off. Here we faced another fragmentation, between HA/DR and AI. I found a solution in the Linux kernel domain, namely, DRBD technology. It had been well-known in its world, but largely disconnected from HA/DR based on Ceph and K8S. In the context of AI on a cloud, however, it proved to be a sweet spot. In essence, DRBD operates very deep inside the system, at the block level of the Linux kernel. Therefore, it remains invisible to both the VFS and local filesystems such as XFS or ext4. This guarantees unbiased behavior of the local filesystems under heavy AI load – in vivid contrast with global filesystems, where the local filesystem is an emulated illusion, which may cause unpredictable side effects unless the application is designed and tested specifically for it. To put it rigorously, block-level replication implements genuine local POSIX compliance rather than emulating it. Yet another great advantage of the block-level approach is that it stores the data on very fast local NVMe drives, as close to the GPU compute cores as possible. This guarantees true bare-metal performance of read operations – and reads are precisely what prevails in AI workloads. Last but not least, this approach is free from extra complexity, requires zero extra investment, and aligns well with cloud-based backbone networks. Then I turned this sweet-spot idea into an easy-to-use product on OCI, the first of its kind.
Now, users can make a few clicks and deploy a full-stack, stateful, bare-metal-performing fault-tolerant computing resource. It comes free of charge as my contribution to the open-source community. This product deploys a fault-tolerant computing environment in a few minutes, and then it guarantees service continuity of any application running on the computing resource. If the resource fails, the application shows a 10–12 second delay, without interruption and without any data delayed or lost, and then carries on as if nothing had happened. Behind the scenes, during these seconds, the service with its data takes over somewhere else in a remote location. This approach also applies to local data storage, which allows for the maximum performance of storage-based AI functionality such as RAG. This is how we provided stable bare-metal performance of the AI.

Effectively, you bring technologies to new areas where they weren’t known, to solve industrial problems more efficiently. How does this approach scale in the AI industry?

It scales perfectly. Here is the simple statistic: over 90% of AI proof-of-concepts fail. The reasons are twofold. First, there is the gap between the concept and the actual, miscalculated result. This is vertical fragmentation. Second, there is the inability to find an execution approach for the concept. This is horizontal fragmentation. Therefore, the problem matters for virtually any AI initiative in the industry. Once we learn how to connect fragmented domains, AI start-ups are more likely to deliver as promised, and enterprises can spend on infrastructure precisely as needed, without overspending.

Getting back to our previous example, FinTech and Telco industries are rapidly adopting AI in the UK, Germany, the UAE, and Saudi Arabia these days. They all need to have AI as fast as possible and fault-tolerant at the same time to outcompete in their market, to effectively return on investment, and to scale their user audience without compromising AI performance. Now they can achieve these goals with the help of the technologies generated using this method.

What differentiates your approach from others in the field?

I set a research framework for solving IT challenges systematically and holistically, as opposed to opportunistic attempts to apply local optimisations.

Thus, the HA problem in AI eventually led me to full-stack fault-tolerance as a product, ubiquitously applicable for any data-aggressive stateful workload, whether AI-related or not.

Similarly, the problem of AI selection supplied the community with a computing engine for Cartesian spaces, equally applicable to the design of experiments, software testing, and so forth. Moreover, these days I’m developing Flex Cartesian further, as I realized it can benefit from other domains, such as quality control methods developed by Taguchi for automobile manufacturers such as Ford and Toyota, and sensitivity analysis methods such as the Morris method.

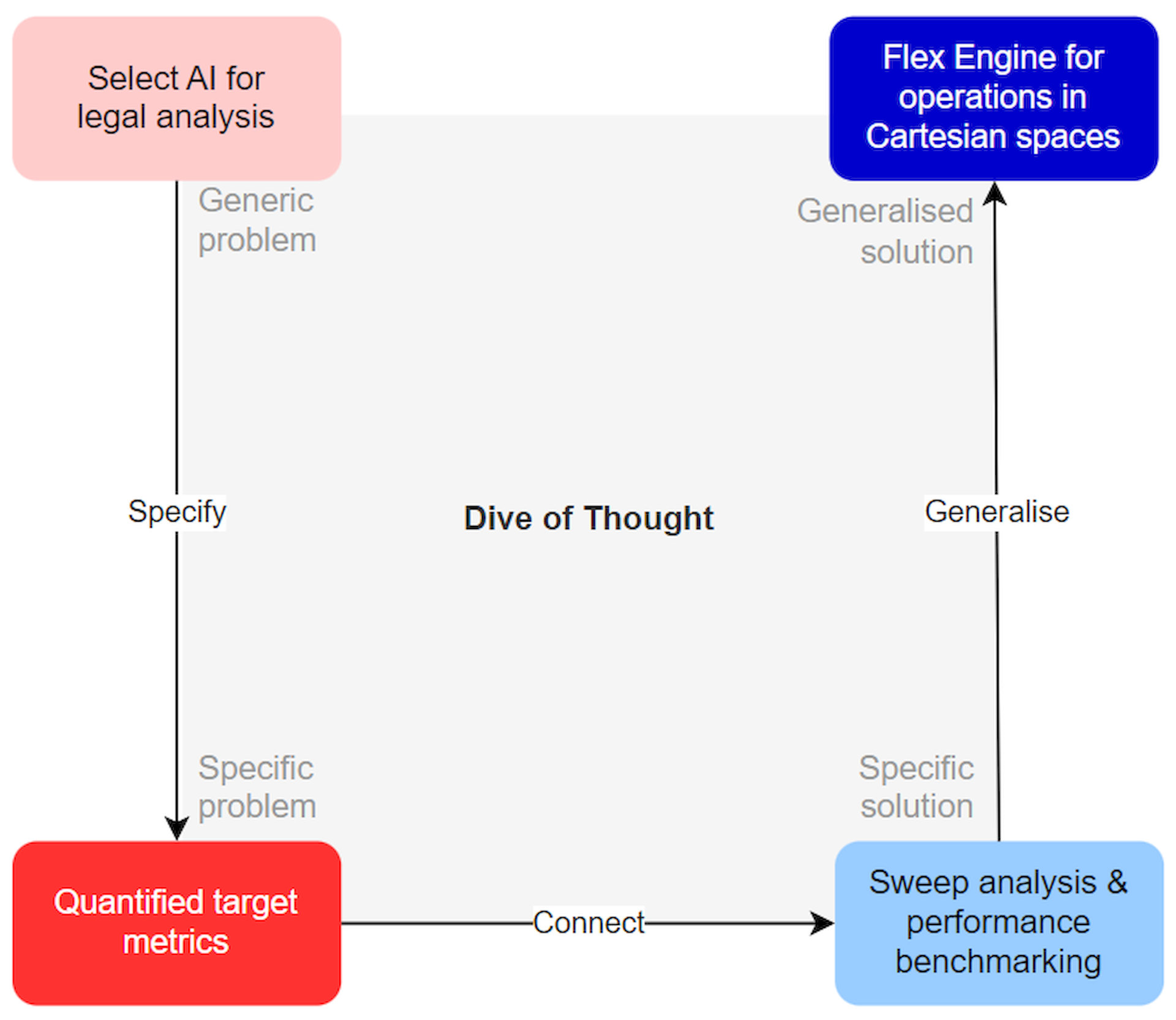

Connecting fragmented domains always generates more powerful innovation than the initial specific problem required, and this is the beauty of the approach. So the key differentiator is the systematic approach of generating new solutions I worked out over the years. It naturally blends strategic thinking with deep technical expertise in an intellectual exercise akin to diving – where a diver starts shallow, goes deeper, finds a pearl, and returns to the surface. As an example, this is precisely how I came to the concept of Flex Cartesian engine:

I dive into domains where I have a solid background: the Linux kernel and system technologies, AI models, data-aggressive architecture design, and distributed computing.

Looking ahead, what are your future plans?

All my work converges toward one direction: building better AI. Sweep analysis enhances AI performance. Fault-tolerance makes AI resilient. The next logical move is up the stack to enhance the architecture of AI per se. I believe current AI implementations have a practical limit in creating knowledge – not to be confused with acquiring knowledge. While I am an enthusiast of AI, I intend to challenge the status quo and explore fundamentally new approaches to AI design. Progress requires questioning conventions, and that is where my focus lies for the coming years.

VIA: DataConomy.com